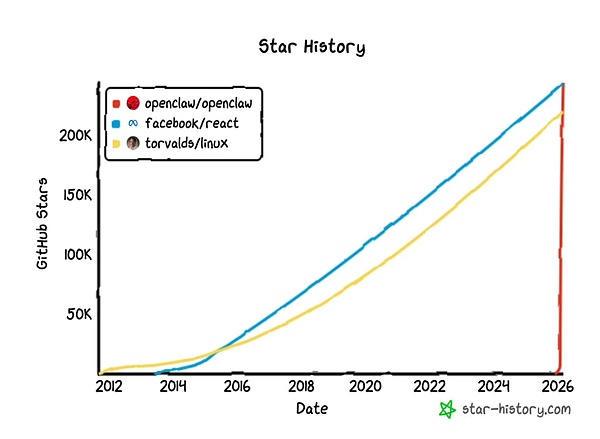

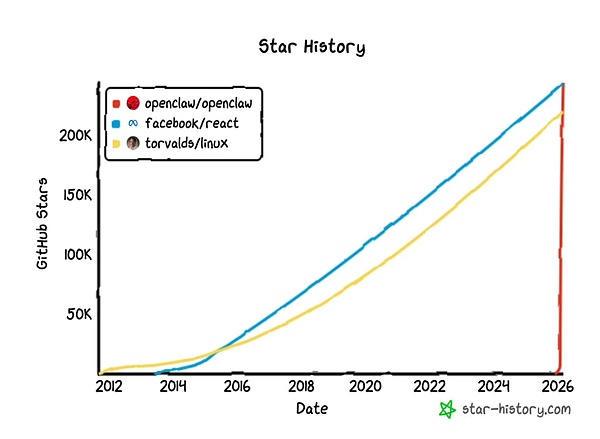

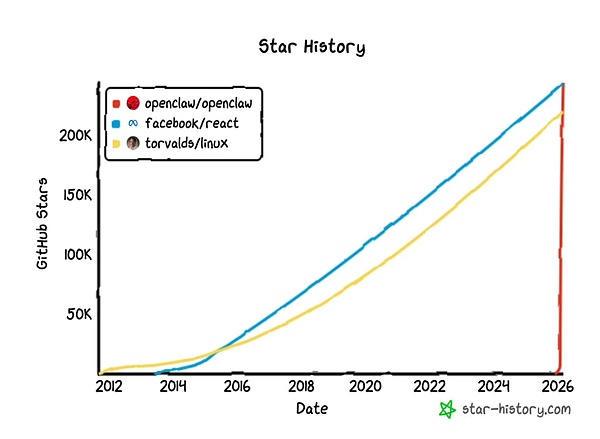

In November 2025, Peter Steinberger, an independent Austrian developer, quietly published a project on GitHub: Clawdbot (since renamed OpenClaw). It drew little attention at first, but by the end of January 2026 things had spiraled out of control. Between January 29th and 30th, the project gained tens of thousands of GitHub stars and quickly passed 100,000. By March 3rd, the count had ballooned to nearly 250,000, topping the star charts and surpassing Linux. For reference, marquee open-source projects like React (one of the world's most popular front-end development frameworks) and Linux (the operating system kernel that supports internet servers) often take more than ten years to accumulate 200,000 stars, while OpenClaw's curve is almost a vertical line.

OpenClaw was originally named Clawdbot, a name that sounds like Claude. On January 27th, Anthropic sent a legal letter forcing a name change. The project was first renamed Moltbot and finally settled on the name OpenClaw. The name change did not slow its spread; if anything, it generated more buzz. On February 16th, Sam Altman announced that Steinberger had joined OpenAI and that OpenClaw would be transferred to an independent open-source foundation supported by OpenAI.

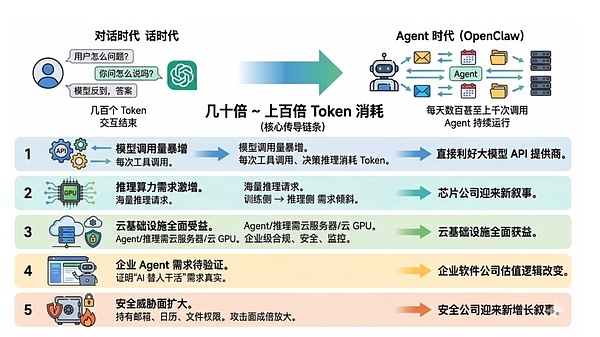

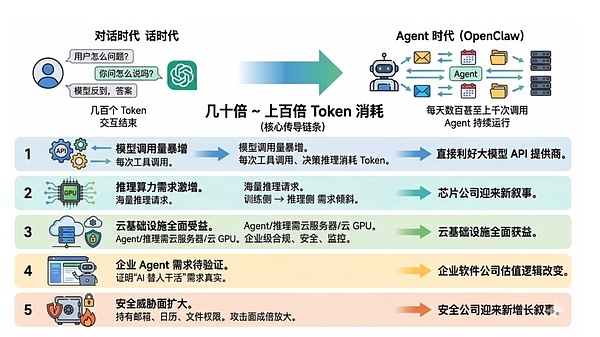

Token Killer App: The Super Flywheel for Large Model Service Providers

If Agents become the mainstream paradigm for AI interaction, the API revenue of large model providers will experience exponential growth.

However, the two largest model providers behind Agents, OpenAI and Anthropic, are not yet publicly listed. The most direct listed proxies for this thesis in the capital market are therefore Microsoft (MSFT) and Alphabet (GOOGL).

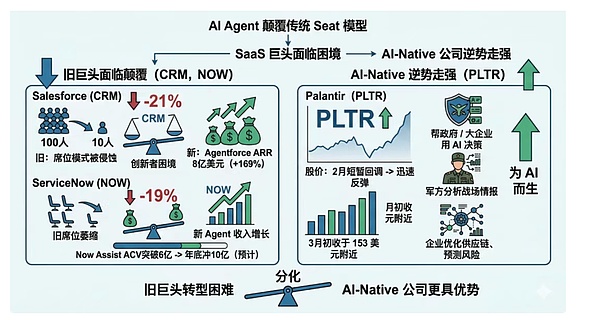

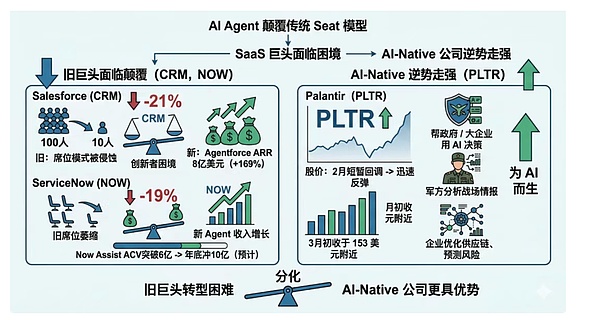

First, Microsoft. As OpenAI's largest external shareholder, it benefits directly: every API request that calls GPT-4o or o1 through Azure OpenAI Service essentially contributes revenue to Microsoft's cloud business. The fact that OpenClaw's founder joined OpenAI and transferred the project to the OpenAI-supported foundation means that the OpenClaw ecosystem will likely be tied more closely to OpenAI models in the future. If OpenAI becomes the top-ranked default model in OpenClaw's recommended list, Microsoft will essentially gain access to a developer portal with 240,000 GitHub stars.

Alphabet (stock code GOOGL / GOOG), Google's publicly traded parent company, benefits along a different dimension. Google's Gemini series is one of the mainstream models supported by OpenClaw, and Gemini 2.0 Flash offers highly competitive inference cost-effectiveness. More importantly, Alphabet is one of the few leading AI model providers that can be invested in directly through the secondary market.

Even more noteworthy, the market does not yet seem to have fully priced in agent-driven API consumption. GOOGL has not risen significantly since February on the back of OpenClaw, while MSFT has gone through a valuation correction. In other words, the expectation gap still exists: the capital market is still valuing model companies using the logic of "chatbots" rather than that of a continuously operating Agent economy.

If token consumption is the gasoline of the Agent era, then GPUs are the engine driving this machine, and the most direct beneficiaries are still the GPU manufacturers NVIDIA and AMD. Over the past three years, the market has valued chip companies primarily on the training side, with major players vying to purchase GPUs to train ever-larger foundation models.
However, training is more of a phased investment, while inference is continuous consumption: every tool call by every agent triggers a new inference request. As agents move from the laboratory to millions of users, the inference share of demand is expected to increase significantly.

This also explains NVIDIA's new narrative. If growth in large one-off training orders slows down, what can sustain GPU demand? The answer Agents offer is a continued increase in inference volume. NVIDIA's latest financial report shows that fiscal Q4 2026 revenue increased by 73% year-on-year, indicating strong demand. The rise of the Agent paradigm provides a more sustainable underlying explanation for this strength.

Now look at AMD. On February 4th, AMD's stock price plummeted 17% after its Q1 financial report fell short of expectations, triggering widespread market panic. However, just 20 days later, Meta announced a five-year, $60 billion AI chip supply agreement with AMD, along with warrants for up to 160 million shares (approximately 10% of AMD's shares), which looks more like a deep strategic partnership. Why does Meta need so much inference computing power? Because it is pursuing so-called personal superintelligence, and realizing that vision requires a massive number of agents running continuously in the background.

OpenClaw is validating not just a product direction, but the whole argument that agents need massive computing power. The growth in inference demand driven by agents will therefore reach the computing power layer first, with NVDA and AMD as the core targets. Among application-layer companies that continuously consume computing power, Meta may also become an important driver of demand.

The True Carrier of Agent Scalability: Cloud Computing

As mentioned earlier, GPUs are the engine of the Agent era; cloud computing platforms are the infrastructure on which these agents run over the long term.
From a capital market perspective, the core targets in this chain are the three major cloud platforms: AMZN, MSFT, and GOOGL. Further upstream, in the data center infrastructure layer, EQIX and DLR may also be indirect beneficiaries.

While OpenClaw touts local deployment, the reality is that, because of security and permission issues, most users won't run an AI Agent on their laptops 24/7. For individuals and enterprises alike, large-scale deployment is likely to end up in the cloud. Alibaba Cloud and Tencent Cloud have already launched one-click deployment services in the Chinese market, which indirectly confirms that the demand is genuine.

There is also an easily overlooked detail: the value of agents to the cloud lies not just in computing power, but also in long-tail inference traffic. AI training orders are characterized by "large clients + large orders + periodicity," while agent inference is driven by "a large number of small clients + high-frequency calls + continuous revenue," a business model that cloud vendors prefer.

Globally, each of the three major cloud vendors has its own advantages. AWS, the world's largest cloud platform, supports multiple model APIs on its Bedrock platform, making it a common deployment environment for developers. Azure benefits from both model APIs and cloud infrastructure, and the exclusive GPT access offered by Azure OpenAI Service is further amplified in agent scenarios. Google Cloud's differentiation lies in its cost structure: the inference price of models like Gemini Flash is significantly lower than that of many flagship models, and in scenarios where Agents run for long periods and consume tokens continuously, this price difference is rapidly amplified.

Another point worth noting: if Agents operate at scale, cloud providers' computing power demands will ultimately translate into data center construction, potentially benefiting Equinix and Digital Realty indirectly.

The popularity of OpenClaw validates a trend: people are willing to let AI do the work for them, not just chat with them. For the traditional enterprise software sector, however, the market sees this as the prelude to the "SaaSpocalypse" (the end of SaaS). At the start of 2026, SaaS giants came under pressure collectively: Salesforce fell 21% year-to-date, and ServiceNow fell 19%.

The root of this panic is a structural contest between agents and software. In the past, commanding a system required a software interface; now agents can invoke the system directly to complete tasks, and the software itself fades from view. This change brings two fundamental problems.

First, the impact of AI is not limited to the "per-user" pricing model; it affects the entire software value chain. For example, Adobe's stock price fell from a high of $699.54 to $264.04, a drop of 62%; education software company Chegg crashed from $115.21 to $0.44, almost to zero; and tax software giant Intuit plunged 16% in a single week in January 2026. The market's concern is not that one particular pricing model is being disrupted, but that generative AI tools (such as Anthropic's) are automating core enterprise workflows and reducing reliance on traditional software functions, permanently compressing the revenue potential of SaaS platforms as a whole.

Second, the more powerful the agent, the more vulnerable the traditional business model becomes. For example, Microsoft is eroding ServiceNow's pricing power and slowing its new customer acquisition through its "Agent 365" bundling strategy. A simple deduction is enough to send chills down investors' spines: if one AI agent can do the work of 100 employees, does a company still need to buy 100 software seats? OpenClaw's mainstream success is essentially accelerating the realization of this logic.

Of course, the giants haven't sat idly by.
Salesforce's AgentForce has reached $800 million in ARR, a year-on-year increase of 169%; the annual contract value of ServiceNow's Now Assist has exceeded $600 million and is expected to reach $1 billion by the end of the year. But it is never easy for giants to dance. They are caught in the classic innovator's dilemma: new agent revenue is growing while old seat revenue is shrinking, and the outcome of this race remains unclear. For CRM and NOW, the core question is whether the incremental growth from agents can fill the gap left by the seat model. The market has already voted with its feet.

Meanwhile, Palantir tells a completely different story. The company focuses on helping governments and large enterprises make critical decisions using AI: the military uses it to analyze battlefield intelligence, and businesses use it to optimize supply chains, predict risks, and deploy AI in their most complex and sensitive business scenarios. After a brief pullback in February, PLTR quickly rebounded, stabilizing around $153 in early March. While the SaaS sector was battered by the "SaaS doomsday" narrative, Palantir bucked the trend and strengthened. This divergence suggests that the winners of the Agent era may not be the old giants that transform fastest, but companies built for AI from the outset.
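
For readers who want to check the arithmetic behind the figures in this section, here is a minimal Python sketch. It uses only the prices and growth rate quoted above; the helper names `drawdown` and `implied_prior` are illustrative, and the prior-year ARR it backs out is an implied figure that assumes the 169% growth applies to the $800 million ARR, not a reported number.

```python
def drawdown(peak: float, trough: float) -> float:
    """Fractional decline from peak to trough (0.25 means a 25% drop)."""
    if peak <= 0:
        raise ValueError("peak must be positive")
    return (peak - trough) / peak


def implied_prior(current: float, yoy_growth: float) -> float:
    """Back out last year's value from this year's value and YoY growth."""
    return current / (1.0 + yoy_growth)


# Inputs are the figures quoted in the article, nothing else.
adobe_drop = drawdown(699.54, 264.04)
chegg_drop = drawdown(115.21, 0.44)
# ARR in millions of USD; 1.69 is the quoted 169% year-on-year increase.
agentforce_prior_arr_musd = implied_prior(800.0, 1.69)

print(f"Adobe drawdown: {adobe_drop:.1%}")
print(f"Chegg drawdown: {chegg_drop:.1%}")
print(f"Implied prior-year AgentForce ARR: ${agentforce_prior_arr_musd:.0f}M")
```

On these inputs, Adobe's decline rounds to the 62% cited, Chegg's exceeds 99%, and a 169% increase to $800 million implies that AgentForce ARR was roughly $300 million a year earlier.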

The hidden benefits of security companies

JinseFinance
